Fine-tuning is taking on a new shape. OpenAI has decided to wind down its fine-tuning service, and some in the community have declared the end of the fine-tuning era. Large language models are powerful tools, yet adapting them to new tasks remains difficult. Most current methods update the model’s internal parameters to improve performance on a specific task. While effective at first, this approach often causes the model to forget previous skills, a problem known as catastrophic forgetting. It can also reduce the model’s ability to learn new tasks in the future.

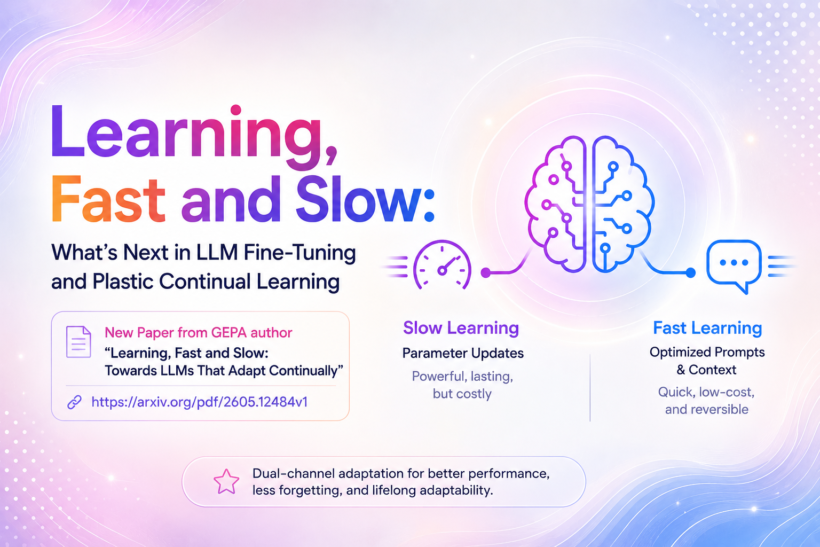

A new paper titled “Learning, Fast and Slow: Towards LLMs That Adapt Continually” presents a promising solution. Early reactions on social media have noted that the paper “splits learning into slow weights and fast, feedback-driven context, yielding big gains in sample efficiency and much less forgetting.” Its authors include Lakshya A. Agrawal, one of the key authors behind the earlier GEPA method. The full paper is available at https://arxiv.org/pdf/2605.12484v1.

The Idea: Fine-Tuning Should Use Two Brains

The authors propose using two complementary ways to adapt large language models at the same time. The first is slow learning through updates to the model parameters. These changes are powerful and lasting but expensive and can reduce the model’s flexibility. The second is fast learning through optimized prompts and textual context. These changes are quick, low cost, and fully reversible.

Instead of choosing only one approach, the method lets both work together. Fast prompts capture task-specific details, while the slow parameter updates focus on deeper, more general improvements. This idea is inspired by earlier neural-network concepts that separate temporary adaptations from long-term knowledge.
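The two channels can be written as two updates on the same policy. The notation below is ours, not necessarily the paper's: θ is the model weights (slow channel), p is the optimized textual context (fast channel), x is a task input drawn from a dataset 𝒟, R is a task reward, and η is a learning rate.

```latex
\begin{aligned}
y &\sim \pi_{\theta}(\,\cdot \mid p, x) \\
p &\leftarrow \arg\max_{p'} \; \mathbb{E}_{x \sim \mathcal{D}}\, \mathbb{E}_{y \sim \pi_{\theta}(\cdot \mid p', x)} \big[ R(x, y) \big] && \text{(fast: search over text, } \theta \text{ fixed)} \\
\theta &\leftarrow \theta + \eta \, \nabla_{\theta} \, \mathbb{E}_{x \sim \mathcal{D}}\, \mathbb{E}_{y \sim \pi_{\theta}(\cdot \mid p, x)} \big[ R(x, y) \big] && \text{(slow: gradient step, } p \text{ fixed)}
\end{aligned}
```

The fast update is a search over text with the weights frozen, so in practice the arg max is only approximated. The slow update is a gradient step on the weights with the context held fixed. Discarding p undoes the fast channel entirely, which is what makes it reversible.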

How the Method Works

The approach combines reinforcement learning for parameter updates with GEPA, a reflective evolutionary prompt optimization technique. It runs in repeating cycles. In each cycle, GEPA first evolves a small set of effective prompts using the current model. Then the method performs several steps of reinforcement learning while using these improved prompts as additional context. This loop allows the fast prompts to absorb recent task-specific lessons quickly. As a result, the model parameters do not need to store every detail. The core model stays closer to its original capabilities and retains more flexibility for future learning.
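The alternating cycle can be illustrated with a toy program. This is a hypothetical sketch under heavy simplifying assumptions, not the authors' code:

- The "model" is a softmax policy over a few actions with slow weights `theta`.
- The "prompt" is an additive bias vector standing in for textual context.
- `evolve_prompts` uses plain Gaussian mutation with elitist selection in place of GEPA's reflective, language-model-driven prompt edits.
- `rl_steps` uses a REINFORCE update with an expected-reward baseline in place of whatever RL algorithm the paper uses.

All function names are made up for illustration.

```python
import math
import random


def softmax(logits):
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def policy(theta, prompt):
    # Toy stand-in: the "prompt" is an additive bias on the logits (fast channel),
    # theta are the model weights (slow channel).
    return softmax([t + p for t, p in zip(theta, prompt)])


def expected_reward(theta, prompt, rewards):
    return sum(pi * r for pi, r in zip(policy(theta, prompt), rewards))


def evolve_prompts(theta, pool, rewards, rng, n_children=8, keep=3, sigma=0.5):
    # Fast channel (hypothetical GEPA stand-in): mutate existing prompts and keep
    # the best ones under the *current* weights. theta is never modified here.
    children = [[v + rng.gauss(0.0, sigma) for v in rng.choice(pool)]
                for _ in range(n_children)]
    candidates = pool + children
    candidates.sort(key=lambda p: expected_reward(theta, p, rewards), reverse=True)
    return candidates[:keep]


def rl_steps(theta, prompt, rewards, rng, steps=20, lr=0.1):
    # Slow channel: REINFORCE updates on theta, conditioned on the evolved prompt.
    theta = list(theta)
    for _ in range(steps):
        probs = policy(theta, prompt)
        action = rng.choices(range(len(probs)), weights=probs)[0]
        baseline = sum(p * r for p, r in zip(probs, rewards))
        advantage = rewards[action] - baseline
        for i in range(len(theta)):
            # d/d theta_i of log softmax at `action` is 1[i == action] - probs[i]
            grad = (1.0 if i == action else 0.0) - probs[i]
            theta[i] += lr * advantage * grad
    return theta


def train(rewards, cycles=10, seed=0):
    rng = random.Random(seed)
    k = len(rewards)
    theta0 = [0.0] * k
    theta = list(theta0)
    pool = [[0.0] * k]  # start from a neutral (empty) prompt
    for _ in range(cycles):
        pool = evolve_prompts(theta, pool, rewards, rng)  # 1) evolve prompts
        theta = rl_steps(theta, pool[0], rewards, rng)    # 2) RL with best prompt
    # L2 distance the weights moved from their starting point
    drift = math.sqrt(sum((a - b) ** 2 for a, b in zip(theta, theta0)))
    return theta, pool[0], drift
```

Calling `train([0.0, 0.2, 1.0, 0.1])` returns three values: the final weights, the best evolved prompt, and the L2 distance the weights moved from their starting point (a crude proxy for drift). The sketch mirrors the ordering described above. The prompt search never touches `theta`, and the RL steps never edit the prompt; each channel only sees the other's latest result.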

Key Benefits

The paper highlights several important advantages of this dual-channel method compared with standard reinforcement learning alone.

- Improves data efficiency, reaching strong performance with fewer training steps.

- Achieves a higher final performance level across the tested tasks.

- The updated model stays closer to the original base model, which helps preserve existing knowledge.

- Maintains better plasticity, meaning the model can still learn new tasks effectively after training on a previous one.

- Supports true continual learning, allowing the model to keep improving when task domains change over time.

These benefits are especially relevant for building AI systems that need to evolve with changing requirements.
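Closeness to the base model is often quantified as a divergence between the two models' output distributions. The sketch below assumes that metric; the paper may report something different. It computes the average per-position KL divergence, KL(tuned || base), from lists of next-token probability vectors. This direction is commonly used as a penalty against a reference model in RL fine-tuning. The helper names `kl_divergence` and `mean_token_kl` are hypothetical. The probability vectors would come from running both models on the same inputs.

```python
import math


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same vocabulary."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # 0 * log(0 / q) is taken as 0 by convention
        if qi == 0.0:
            return math.inf  # p puts mass where q has none
        total += pi * math.log(pi / qi)
    return total


def mean_token_kl(tuned_dists, base_dists):
    """Average KL(tuned || base) over positions; lower means closer to base."""
    if len(tuned_dists) != len(base_dists) or not tuned_dists:
        raise ValueError("need one distribution per position from each model")
    kls = [kl_divergence(t, b) for t, b in zip(tuned_dists, base_dists)]
    return sum(kls) / len(kls)
```

For example, a tuned model whose next-token distributions barely move from the base model's gets a small `mean_token_kl`. One that shifts probability mass to different tokens gets a much larger value.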

Limitations

The experiments use 8B-scale models and focus on specific reasoning tasks, including code output prediction, math reasoning, and multi-hop fact verification. Results may not extend directly to much larger models or other domains. The prompt evolution step adds some extra computation during training. The authors present the method as a useful extension to existing reinforcement learning pipelines rather than a replacement for all post-training approaches. Further research is needed to explore its scaling and broader applicability.

Timely Context: Recent Developments in Fine-Tuning

Recent discussions reflect a changing landscape for LLM fine-tuning. OpenAI is winding down its self-serve fine-tuning platform, with new training jobs restricted and the service ending for new use by January 2027. Many in the community see this as a move toward greater reliance on prompt engineering, context management, and RAG. At the same time, there is increased interest in open-weight models and custom post-training, especially for domain-specific agents and long-horizon tasks.

The dual-channel approach in this paper offers a practical path for efficient and continual adaptation in this new environment.

The Next Frontier in Fine-Tuning

For AI and machine learning practitioners and software engineers working with large language models, this paper encourages a shift in how we think about fine-tuning. By letting optimized prompts share the adaptation load, it becomes possible to train more efficiently while keeping the model adaptable over time. This dual-channel perspective could lead to lower training costs, more reliable systems, and large language models that continue to improve without the common drawbacks of forgetting or rigidity.

The complete paper, including all methods and supporting details, is available here: https://arxiv.org/pdf/2605.12484v1.

This research points toward a practical path for developing large language models that are not only strong but also genuinely adaptable throughout their lifetime. I look forward to applying it to some of my own use cases where it fits; for now, enjoy the paper from a great group of authors.