Last week, I returned to London from the San Francisco Bay Area with renewed energy, fresh insights, and a deep appreciation for the people driving artificial intelligence forward. Since coming back, […]

PyFlue 0.2.0: Bringing Flue’s Agent Runtime Model to Python

PyFlue 0.2.0 is now available. This release is a major step toward parity with the TypeScript Flue framework and introduces a clearer runtime model for building production-oriented Python agents. The […]

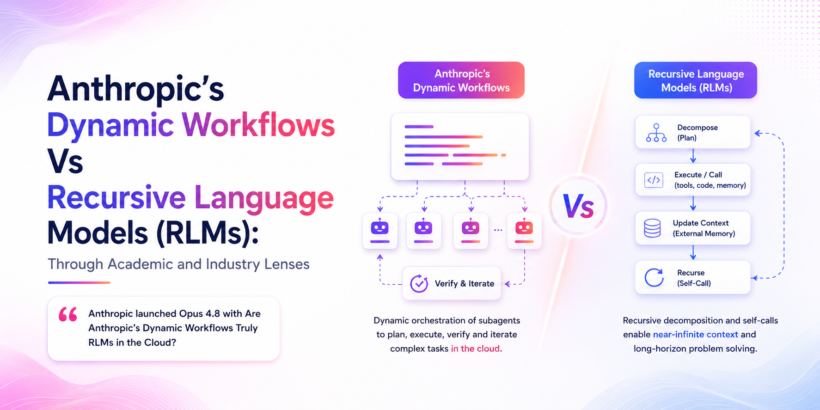

Dynamic Workflows Vs Recursive Language Models (RLMs): Through Academic and Industry Lenses

Are Anthropic’s Dynamic Workflows Truly RLMs in the Cloud? Anthropic recently launched Opus 4.8, its smartest model, which is highly capable at coding tasks and […]

CodexOpt Brings Microsoft SkillOpt to Codex: Optimizing Agent Skills with Execution Feedback

Microsoft Research released the SkillOpt paper. The work has generated considerable discussion across the AI community. Researchers and engineers highlight its disciplined approach to improving agent capabilities without modifying model […]

Grok Build Enters the Agentic Coding Arena with Official Grok CLI: Game ON

xAI has officially entered the agentic coding arena. On May 14, 2026, the company launched an early beta of Grok Build, its native agentic command-line interface (CLI). In the launch […]

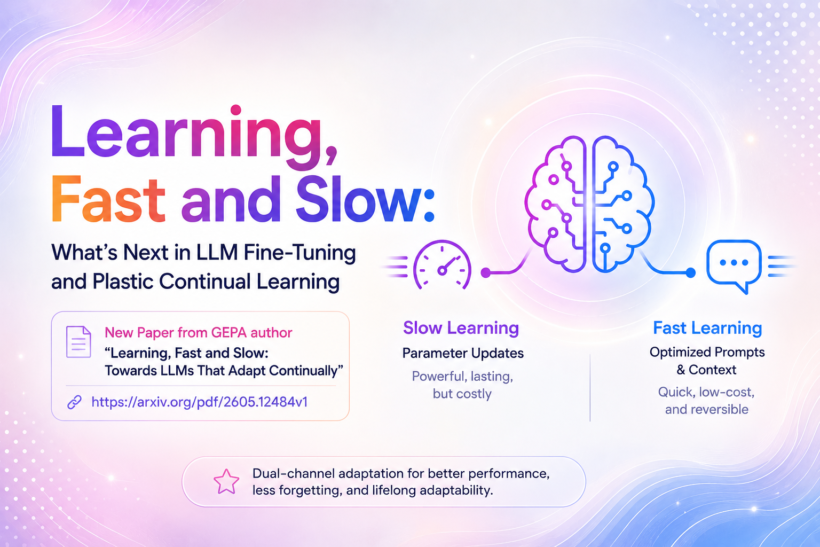

Learning, Fast and Slow: What’s Next in LLM Fine-Tuning and Plastic Continual Learning with GEPA

Fine-tuning has been taking a new shape in recent days: OpenAI decided to wind down its fine-tuning service, and a few others have claimed the end of fine-tuning […]

The Rise of Agent Harness Frameworks and Harness As a Service (HaaS)

The rapid maturation of agentic AI in 2026 has recently shifted attention from the models to the scaffolding around them. While model capabilities continue to advance, the decisive factor in building […]

London Agentic AI x Tessl: Community Partner for AI Native DevCon London 2026

Excited to announce that London Agentic AI is officially joining AI Native DevCon London 2026 as a Community Partner. This partnership is personally meaningful to me because I have been […]

What’s New in PyFlue v0.1.3?

Thrilled to announce the release of PyFlue v0.1.3. This major update introduces significant enhancements to the framework. PyFlue is the official Python port of the recently launched Flue framework (flueframework.com), […]

Introducing PyFlue: The Python-Native Agent Harness Framework Inspired by Flue

When Fred Schott, the “CEO of HTML”, released Flue, the TypeScript community quickly recognized its significance. A true agent harness framework with Markdown-driven skills, headless and programmable design, zero-config sandboxing, and […]